“Information will never replace illumination”

Susan Sontag

Artificial General Intelligence is a Red Herring · Sibylline.dev

In the blog post "Artificial General Intelligence is a Red Herring" on Sibylline.dev, the author argues that the pursuit of Artificial General Intelligence (AGI) is misguided and potentially wasteful. The piece critiques the vague definitions of AGI and the unrealistic expectations surrounding its development. Instead, the author advocates for a task-centric approach to AI, focusing on creating specialized tools that excel in specific domains rather than striving for a monolithic superintelligent model. The author emphasizes the importance of benchmarks and specialized models to achieve practical and measurable advancements in AI.

Key Points

Critique of AGI Pursuits:

The term AGI lacks a clear, universally accepted definition, ranging from "smarter than the average human at most things" to "smarter than the smartest humans at everything."

Achieving AGI, especially in its strongest definition, is probably unfeasible and not a practical goal.

Limitations of Current AI Approaches:

Current AI models, including reinforcement learning systems like AlphaGo, are specialized tools optimized for specific tasks rather than steps towards AGI.

The intelligence of AI models is inherently limited by the intelligence of their creators and the data they are trained on.

Exponential vs. Sigmoidal Growth:

Progress in AI is more likely to follow a sigmoidal (S-shaped) curve rather than an exponential one, meaning initial rapid progress will plateau as systems approach their capacity limits.

The "no free lunch theorem" (NFLT) implies that no single model can be best at all tasks, reinforcing the need for specialized models.
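The sigmoidal-versus-exponential point above can be made concrete with a small numeric sketch. The two curves below share the same starting value and growth rate. The specific numbers (`rate=0.5`, `capacity=100.0`) are illustrative assumptions, not figures from the post.

```python
import math


def exponential(t, x0=1.0, rate=0.5):
    """Unbounded exponential growth: x(t) = x0 * e^(rate * t)."""
    return x0 * math.exp(rate * t)


def logistic(t, x0=1.0, rate=0.5, capacity=100.0):
    """Sigmoidal growth: solution of dx/dt = rate * x * (1 - x / capacity), x(0) = x0."""
    return capacity / (1.0 + (capacity / x0 - 1.0) * math.exp(-rate * t))


# Early on the two curves are nearly indistinguishable; later the logistic
# curve flattens out as it approaches the (assumed) capacity limit.
for t in range(0, 25, 4):
    print(f"t={t:2d}  exponential={exponential(t):10.1f}  logistic={logistic(t):6.1f}")
```

The practical consequence is that early data alone cannot tell the two regimes apart, which is why extrapolating from a period of rapid progress is risky.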

Task-Centric Approach:

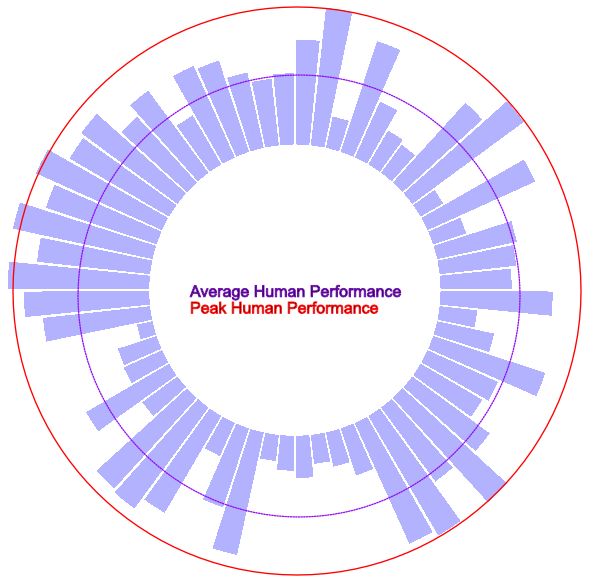

Instead of aiming for AGI, focus should be on how well models perform specific tasks compared to human performance.

Developing a suite of specialized AI tools for various tasks would be more efficient and practical.

The Path Forward

So, if we’re really serious about building AGI, what should we be doing today?

The first thing we need is an improved suite of truly exhaustive benchmarks. If our definition of AGI is “better than most humans at everything,” we can’t even make coherent statements about that until we have the ability to measure our progress, and a deep benchmark suite is the starting point. This will entail moving beyond simple logical problems to complex world simulating scenarios, adversarial challenges and gamification of a variety of common tasks.

Secondly, stop trying to train supermodels that know everything, pick a problem domain that AI isn’t good at yet and really hammer it with specialized models until we have tools that match or exceed the abilities of the best humans in that area. If you really want to push the boundaries of general foundation models, consider working on making them more efficient or easily tuneable/moddable. I also think there is a lot of low hanging fruit in transfer learning between languages, and any improvements there will make models better at coding and math as a side effect.

Finally, we need to design asynchronous agents that are capable of out of band thought/querying and online learning (in the optimization sense) and have been trained to identify sub-problems, select tools from a catalog to answer them, then synthesize the final answer from those partial answers. The agent could convert sub-problems into embeddings then use their positions in embedding space to select the models to process them with. I suspect this agent could be created with a multimodal model having a “thought loop” which can trigger actions based on the evolution of its objectives over time, with most actions being tool invocations producing output that further informs those objectives.
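As a rough illustration of the embedding-based tool selection described above, the sketch below routes each sub-problem to the catalog entry whose description is closest in a toy embedding space. Everything here is an assumption made for illustration: the tool names, their descriptions, and the bag-of-words `embed` function, which stands in for a real learned embedding model.

```python
import math
from collections import Counter


def embed(text):
    """Toy embedding: bag-of-words counts (a stand-in for a learned embedding)."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical tool catalog: name -> short description of what the tool handles.
TOOL_CATALOG = {
    "math_solver": "solve equation algebra integral arithmetic number",
    "code_writer": "write python function code program bug",
    "translator": "translate sentence french german language",
}


def select_tool(sub_problem, catalog=TOOL_CATALOG):
    """Pick the tool whose description is nearest to the sub-problem.

    If nothing overlaps, every score is 0.0 and the first catalog entry wins.
    """
    query = embed(sub_problem)
    return max(catalog, key=lambda name: cosine(query, embed(catalog[name])))


def route(sub_problems):
    """Pair each sub-problem with its selected tool."""
    return [(p, select_tool(p)) for p in sub_problems]


for problem, tool in route([
    "solve this equation for x",
    "write a python function that sorts a list",
    "translate this sentence into french",
]):
    print(f"{tool:12s} <- {problem}")
```

A real agent would still need the other pieces the post lists (decomposing the problem, synthesizing partial answers, the "thought loop"); this only sketches the selection step.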

There is very little uncertainty in this path, no model regressions, no measurement problem and no need for supergenius engineers, only the steady ratcheting of human cultural progress. Beyond that, this method will put the whole “when will we have AGI” debate to bed because we’ll be able to track the coverage of tasks where AI is better than human, we’ll be able to track the creation of new tasks and the rate at which existing tasks fall to AI and use a simple machine learning predictor to get a solid estimate for the exact date when AI will be better than humans at “everything.”

Key Quotes

"People throw the term AGI around, but it lacks a clear definition that we can rally around."

"Reinforcement learning systems aren’t so much a path to AGI as specialized knowledge creation engines for domains with a succinctly describable objective."

"Exponential growth is impossible in any finite system - as the system approaches the limits of its capacity, growth becomes logarithmic."

"If there is a specialized tool for every known task a human might undertake that achieves near human optimal performance, the task of creating 'AGI' becomes the task of creating an agent that is just smart enough to select the right tools for the problem at hand."

Why It Matters

This blog post provides a critical perspective on the current trajectory of AI research and development, challenging the mainstream focus on AGI. By highlighting the practical limitations and inefficiencies of pursuing AGI, the author underscores the importance of a more grounded and task-specific approach to AI. This perspective is crucial for guiding the allocation of resources and efforts in AI research, promoting sustainable and measurable advancements. It encourages the development of specialized AI tools that can provide immediate and tangible benefits, rather than chasing the elusive and potentially unattainable goal of AGI.

AI isn't useless. But is it worth it? (citationneeded.news)

Molly White explores the utility and ethical implications of generative Artificial Intelligence (AI). Although she acknowledges that AI tools can be useful for specific tasks, she argues that the benefits do not justify the significant harms associated with their development and deployment. White draws a parallel between her views on AI and her skepticism toward blockchain technology, noting that both are often overhyped and misapplied. She critiques the inflated promises made by AI companies and highlights various ethical concerns, including environmental costs and abusive labor practices. White emphasizes that while AI can be handy for mundane tasks, it fails to generate novel ideas or replace skilled professionals. She also discusses the problematic nature of AI-generated content flooding the internet and questions whether the tasks AI excels at are even worth doing in the first place.

Key Points

Utility vs. Hype:

AI tools can be useful for specific, mundane tasks but are often overhyped by companies claiming they can replace skilled professionals or generate complex creative works.

Like blockchain technology, AI often fails to live up to its grandiose promises and has significant drawbacks.

Ethical Concerns:

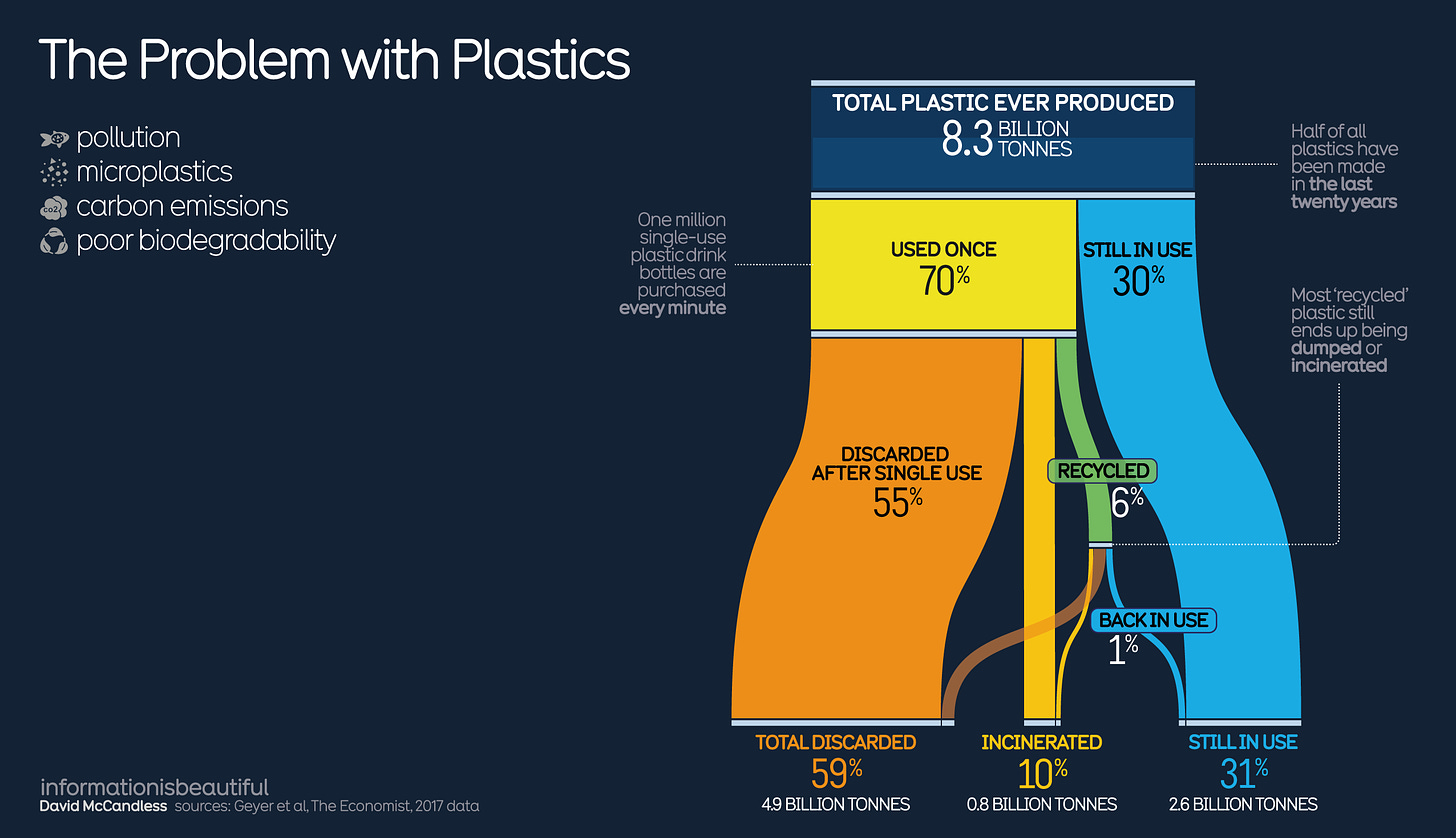

Development and training of large language models (LLMs) involve substantial environmental costs due to high energy consumption.

AI companies are frequently criticized for unethical labor practices.

Practical Use Cases:

AI is beneficial for simple tasks like proofreading, boilerplate code, and generating meeting notes.

Tools like GitHub's Copilot can assist with coding, although they have flaws, such as hallucinating imports or generating plausible but incorrect code.

Limitations of AI:

AI-generated content often lacks the nuance and originality of human-created work.

LLMs are prone to "hallucinations," producing errors that humans must carefully check.

Impact on Employment and Content Quality:

AI-generated content replaces human work in areas like journalism, often leading to lower-quality outputs.

The proliferation of AI-generated content contributes to the "enshittification" of the internet, where low-quality, high-volume text dominates.

Questioning Value:

White questions whether the tasks AI is good at are necessary or valuable, suggesting that some might be better off eliminated rather than automated.

Key Quotes

On AI’s Utility:

"AI can be kind of useful, but I'm not sure that a 'kind of useful' tool justifies the harm."

Comparison with Blockchain:

"When I boil it down, I find my feelings about AI are actually pretty similar to my feelings about blockchains: they do a poor job of much of what people try to do with them, they can't do the things their creators claim they one day might, and many of the things they are well suited to do may not be altogether that beneficial."

Ethical Concerns:

"The costs of these AI models are huge, and not just in terms of the billions of dollars of VC funds they're burning through at incredible speed."

On AI-generated Content:

"ChatGPT does not write, it generates text, and anyone who's spotted obviously LLM-generated content in the wild immediately knows the difference."

Impact on the Internet:

"If the internet's enshittification feels worse post-ChatGPT, it's because of the quantity and speed at which this junk is being produced, not because the junk is new."

Why It Matters

Molly White's critique is significant for several reasons:

Balanced Perspective on AI:

Her views provide a nuanced understanding that acknowledges AI's utility while rigorously questioning its broader impacts. This balance is crucial in a debate often polarized between AI enthusiasts and detractors.

Highlighting Ethical Issues:

White’s emphasis on the environmental and human costs of AI development brings attention to often-overlooked aspects of the technology, encouraging a more holistic evaluation of its benefits and drawbacks.

Impact on Employment and Content Quality:

Her discussion on replacing human jobs with AI and the resulting decline in content quality is a critical consideration for policymakers, businesses, and consumers.

Encouraging Critical Examination:

By questioning the true value of the tasks AI excels at, White prompts readers to think critically about the role of technology in our lives and whether it genuinely enhances productivity or merely adds to digital clutter.

Future of AI Development:

Her insights can inform the future direction of AI development, advocating for more responsible and ethical practices prioritizing genuine human benefit over corporate profits.


Conclusion

Molly White's newsletter provides a comprehensive critique of AI's current state. It balances recognition of its practical uses with a strong emphasis on its ethical, environmental, and social challenges. Her analysis calls for critically evaluating AI's true value and ensuring that its development and deployment are aligned with broader societal good.

New GitHub Copilot Research Finds 'Downward Pressure on Code Quality' -- Visual Studio Magazine

The article from Visual Studio Magazine, titled "New GitHub Copilot Research Finds 'Downward Pressure on Code Quality,'" discusses recent findings on the impact of GitHub Copilot, an AI-powered coding assistant, on software development. The research conducted by GitClear highlights several adverse effects on code quality and maintainability. In contrast to previous studies that emphasized productivity gains with Copilot, GitClear's "Coding on Copilot" whitepaper suggests that AI-assisted code resembles the work of short-term contractors rather than seasoned developers, leading to increased code churn and duplicated code.

Key Points

Research Focus and Findings:

GitClear's research investigated the quality and maintainability of AI-assisted code.

The study found that AI-generated code is more prone to issues and less maintainable than human-written code.

Key metrics showed significant increases in code churn and copy/pasted code.

Key Metrics and Trends:

Code Churn: Projected to double in 2024 compared to the 2021 pre-AI baseline.

Copy/Pasted Code: An increased proportion of code is repeated rather than refactored.

Moved Code: Decline in code refactoring and reuse, indicating a lack of adherence to the DRY (Don't Repeat Yourself) principle.
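To make the churn metric concrete, here is a minimal sketch based on the whitepaper's stated definition (lines reverted or updated less than two weeks after being authored). The record format, a list of `(authored_on, changed_on)` pairs, and the sample dates are hypothetical; this is not GitClear's actual methodology or tooling.

```python
from datetime import date, timedelta

# Window taken from the quoted definition: "less than two weeks".
CHURN_WINDOW = timedelta(days=14)


def churn_rate(lines):
    """Percentage of lines changed or reverted within the churn window.

    `lines` is a list of (authored_on, changed_on) pairs, where changed_on
    is None for lines that were never touched again.
    """
    if not lines:
        return 0.0
    churned = sum(
        1
        for authored_on, changed_on in lines
        if changed_on is not None and changed_on - authored_on < CHURN_WINDOW
    )
    return 100.0 * churned / len(lines)


# Hypothetical sample: four authored lines and when (if ever) they changed.
sample = [
    (date(2024, 1, 1), date(2024, 1, 5)),    # changed after 4 days: churn
    (date(2024, 1, 1), date(2024, 3, 1)),    # changed after two months: not churn
    (date(2024, 1, 10), None),               # never changed: not churn
    (date(2024, 1, 10), date(2024, 1, 23)),  # changed after 13 days: churn
]

print(f"churn: {churn_rate(sample):.1f}%")
```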

Comparison with Previous Studies:

GitHub's 2022 study highlighted productivity gains and faster task completion (55% faster) with Copilot.

GitClear's study focuses on the composition and maintainability of code, showing more negative effects.

Implications for Technical Leaders:

Leaders need to be aware of the increased code churn and the potential for future maintenance headaches due to duplicated code.

There is a need to balance productivity gains with long-term code quality and maintainability.

Future Outlook:

The research raises questions about the long-term impact of AI on software development and the responsibilities for maintaining AI-generated code.

Key Quotes

GitClear Whitepaper Abstract:

"We find disconcerting trends for maintainability. Code churn -- the percentage of lines that are reverted or updated less than two weeks after being authored -- is projected to double in 2024 compared to its 2021, pre-AI baseline."

On Code Churn:

"The bottom line is that 'using Copilot' is strongly correlated with 'mistake code' being pushed to the repo."

On Copy/Pasted Code:

"There is perhaps no greater scourge to long-term code maintainability than copy/pasted code."

Conclusion of the Paper:

"How will Copilot transform what it means to be a developer? There's no question that, as AI has surged in popularity, we have entered an era where code lines are being added faster than ever before. The better question for 2024: who's on the hook to clean up the mess afterward?"

Why It Matters

The findings from GitClear's research are critical for several reasons:

Long-Term Code Quality: The increase in code churn and copy/pasted code suggests that while AI tools like Copilot can boost productivity, they may compromise the long-term quality and maintainability of the codebase.

Developer Practices: The decline in refactoring and reusing code underscores the need for developers to remain vigilant about best practices, even when using AI assistance.

Technical Leadership: Software development leaders must balance the immediate productivity gains provided by AI tools with the potential for increased maintenance costs and technical debt.

Future of AI in Development: The research invites a broader conversation about the role of AI in software development, particularly regarding the responsibilities and strategies for managing AI-generated code.

The study highlights the importance of a nuanced approach to integrating AI tools into development workflows, ensuring that the benefits do not overshadow critical aspects of software engineering.

How Perplexity builds product - by Lenny Rachitsky (lennysnewsletter.com)

Lenny Rachitsky's article, "How Perplexity builds product," discusses the unique strategies and methodologies employed by Perplexity, an AI-driven company that has rapidly grown to challenge giants like Google and OpenAI in the search industry. The piece features insights from Johnny Ho, Perplexity’s co-founder and head of product, covering how the company leverages AI throughout its processes, organizes its teams, and maintains a high hiring bar to sustain its innovative edge.

Key Points

AI Integration: Perplexity utilizes AI at every step of the company-building process, from product management to HR, encouraging employees to consult AI before turning to colleagues.

Team Structure: The company minimizes coordination costs by structuring teams like slime mold, allowing them to parallelize projects and function with minimal management overhead.

Small, Autonomous Teams: Typical teams consist of two to three people, with some projects handled by a single individual, promoting self-driven work and reducing the need for extensive managerial oversight.

High Hiring Standards: Perplexity prioritizes flexibility, initiative, and the ability to work effectively in resource-constrained environments. They avoid hiring those who specialize solely in managing processes or leading people.

Future of Product Roles: Johnny Ho predicts that technical PMs or engineers with product taste will become increasingly valuable, given the rise of AI and its impact on reducing the need for traditional management skills.

Quarterly Planning: The company operates on a flexible quarterly planning system, allowing them to adapt quickly to rapid changes in the AI landscape.

OKRs and Data-Driven Goals: Perplexity uses rigorous, measurable objectives in its quarterly planning, aiming for aggressive targets and using unmet goals to identify gaps in prioritization and staffing needs.

Decentralized Decision-Making: Projects are driven by a single Directly Responsible Individual (DRI) with execution steps broken down into parallel tasks to minimize coordination issues.

Flat Organization: The company maintains a flat structure, with reporting lines designed to support top-level goals rather than dictate priorities.

Task Management Tools: Perplexity uses Linear for task management and bug tracking, and Notion for storing sources of truth like roadmaps and documentation.

Key Quotes

Johnny Ho on AI Usage: "We’d start everything by asking AI, ‘What is X?’ and then ‘How do we do X properly?’"

On Team Structure: "My goal is to structure teams around minimizing ‘coordination headwind,’ as described by Alex Komoroske in this deck on seeing organizations as slime mold."

On Hiring Philosophy: "Given the pace we are working at, we look foremost for flexibility and initiative. The ability to build constructively in a limited-resource environment (potentially having to wear several hats) is the most important to us."

Future of Product Roles: "If I had to guess, technical PMs or engineers with product taste will become the most valuable people at a company over time."

Why It Matters

This article provides a blueprint for modern, AI-driven product development and organizational structure, highlighting how a small, nimble team can compete with industry giants. Perplexity’s approach showcases the potential of AI to streamline operations, reduce the need for traditional management roles, and foster rapid innovation. By prioritizing flexibility, initiative, and a decentralized decision-making process, Perplexity exemplifies a forward-thinking model that other companies can learn from as they navigate the evolving tech landscape.

The Bitter Lesson (incompleteideas.net)

In "The Bitter Lesson," Rich Sutton argues that the most significant insight from 70 years of AI research is that general methods leveraging vast amounts of computation, such as search and learning, are ultimately far more effective than approaches that incorporate human knowledge. Sutton attributes this to Moore's Law, or more precisely its generalization: the continued exponential fall in the cost per unit of computation. He illustrates his point with examples from computer chess, Go, speech recognition, and computer vision, showing how initial efforts to embed human expertise into AI systems were eventually outperformed by methods that exploited computational power. Sutton concludes that AI research should focus on scalable, general-purpose methods rather than attempting to mimic human cognitive processes.

Key Points

General Methods and Computation:

General methods that leverage computation are significantly more effective over time.

The continual increase in computational power (Moore’s Law) outpaces the usefulness of human knowledge in improving AI systems.

Historical Examples:

Computer Chess: Early efforts focused on human-like understanding of chess, but brute-force search methods ultimately defeated world champion Kasparov in 1997.

Computer Go: Human knowledge-based approaches failed, and success came with search and learning methods, highlighted by AlphaGo.

Speech Recognition: Transition from human-knowledge-based methods to statistical methods like HMMs, and later deep learning, led to significant improvements.

Computer Vision: Early methods based on human-like understanding of vision were replaced by deep learning approaches that rely on convolution and invariances.

The Bitter Lesson:

AI researchers often try to build human knowledge into systems, which helps in the short term but inhibits long-term progress.

True breakthroughs come from methods that scale with increased computation, such as search and learning.

Complexity of Minds:

The contents of human minds are irredeemably complex and should not be directly built into AI systems.

AI should focus on meta-methods that can discover and approximate this complexity rather than embedding specific human discoveries.

Scalability:

Search and learning are the methods that scale effectively with increased computational power.

These methods enable AI systems to handle arbitrary complexity and adapt to new information.

Key Quotes

"The biggest lesson that can be read from 70 years of AI research is that general methods that leverage computation are ultimately the most effective, and by a large margin."

"The only thing that matters in the long run is the leveraging of computation."

"The journey of AI research shows that human-knowledge-based methods plateau and inhibit further progress."

"We want AI agents that can discover like we can, not which contain what we have discovered."

Why It Matters

"The Bitter Lesson" is pivotal for understanding the trajectory of AI research and development. It emphasizes the importance of focusing on scalable, computation-based methods rather than trying to replicate human cognitive processes. This insight is crucial for guiding future AI research, ensuring that efforts are directed towards approaches that will remain effective as computational power continues to grow. By learning from past mistakes and successes, AI researchers can develop more robust, adaptable, and powerful AI systems that leverage the full potential of modern computational capabilities.

A Better Lesson – Rodney Brooks' Counterargument to “The Bitter Lesson”

In the blog post titled "A Better Lesson," Rodney Brooks critiques Rich Sutton's post "The Bitter Lesson," which argues that increasing computational power and minimizing built-in knowledge have historically been the most successful approach in AI development. Brooks counters this argument by emphasizing the importance of human ingenuity and the practical limitations of relying solely on computation. He discusses the inherent inefficiencies and unsustainable nature of current AI methodologies, advocating for a balanced approach that combines human expertise with computational power.

Key Points

Critique of Sutton's Viewpoint:

Sutton suggests that the most successful AI systems rely on more computation and less built-in knowledge.

Brooks argues that this approach overlooks the importance of human-designed structures in AI systems.

Importance of Human-Designed Structures:

Brooks highlights that Convolutional Neural Networks (CNNs) are successful partly because their front-end is designed by humans to handle translational invariance.

He argues that completely eliminating human-designed structures would drastically increase computational costs.
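Brooks' point about human-designed structure can be illustrated with a toy one-dimensional example. Because a convolution applies the same kernel at every position, shifting the input simply shifts the feature map, and a global max over the features is then unchanged by the shift. The signal, kernel, and circular boundary handling below are assumptions chosen to keep the sketch small; this is not a CNN and not code from Brooks' post.

```python
def circular_conv1d(signal, kernel):
    """Slide the same kernel over every position (wrapping at the edges).

    As in most deep learning libraries, this is technically cross-correlation.
    """
    n = len(signal)
    return [
        sum(kernel[j] * signal[(i + j) % n] for j in range(len(kernel)))
        for i in range(n)
    ]


def shift(signal, k):
    """Circularly shift a list to the right by k positions."""
    k %= len(signal)
    return signal[-k:] + signal[:-k] if k else list(signal)


signal = [0, 0, 1, 3, 1, 0, 0, 0]  # a small "bump" somewhere in the input
kernel = [1, 2, 1]                 # hand-chosen weights shared across positions

features = circular_conv1d(signal, kernel)
shifted_features = circular_conv1d(shift(signal, 3), kernel)

# Shifting the input shifts the features by the same amount (equivariance).
print(shifted_features == shift(features, 3))
# A global max-pool over the features ignores where the bump was (invariance).
print(max(shifted_features) == max(features))
```

The weight sharing is the built-in design choice: without it, the network would have to learn the same detector separately at every position.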

Limitations of Current AI Systems:

Current AI systems, like CNNs, struggle with tasks such as color constancy, leading to errors that humans would not make (e.g., misidentifying traffic signs).

The necessity of massive data sets for training is impractical and unsustainable.

Human Involvement in AI Development:

Despite claims of minimizing human involvement, human ingenuity is still crucial in designing network architectures and training regimes.

The shift towards requiring humans to create large training sets is seen as a form of sleight of hand.

Sustainability and Practicality:

The computational power required for training large AI systems is only affordable for large corporations, not individuals or smaller institutions.

The energy consumption and carbon footprint of these systems are significant concerns.

Hardware Limitations:

Moore's Law is slowing down, making it impractical to rely solely on increasing computational power.

Specialized hardware for AI tasks locks in specific solutions and requires human intelligence to design.

Brooks' Proposed Lesson:

The better lesson from AI history is to consider the total cost of solutions, including human ingenuity and sustainability.

A balanced approach that integrates human-designed knowledge with computational power is more practical and efficient.

Key Quotes

"The very essence of CNNs is that the front end of the network is designed by humans to manage translational invariance."

"Massive data sets are not at all what humans need to learn things so something is missing."

"Saying that a particular solution style minimizes a particular sort of human ingenuity that is needed while not taking into account all the other places that it forces human ingenuity (and carbon footprint) to be expended is a terribly myopic view of the world."

Why It Matters

Rodney Brooks' critique is significant because it addresses fundamental issues in the current trajectory of AI development. By emphasizing the limitations and unsustainable aspects of relying solely on computational power, Brooks advocates for a more balanced and practical approach. His insights highlight the need for integrating human expertise into AI systems, ensuring that the development of AI is both efficient and sustainable. This perspective is crucial for guiding future AI research and development, ensuring that it remains accessible and beneficial to a broader range of stakeholders.