“Those who cannot remember the past are condemned to repeat it.”

George Santayana

Parkinson's Law: It's Real, So Use It - The Engineering Manager

The article discusses Parkinson's Law, which states that "work expands so as to fill the time available for its completion." James Stanier explains that this phenomenon is counter-intuitive but true in practice. Without deadlines, projects tend to take longer and may suffer from feature creep and scope bloat. By setting challenging but realistic deadlines, better results can be achieved. Stanier introduces the concept of the Iron Triangle, which consists of scope, resources, and time, and emphasizes that changing one of these elements affects the others.

The article argues that deadlines create urgency and drive progress, preventing the natural tendency for tasks to expand indefinitely. Stanier acknowledges that poorly set deadlines can lead to subpar work but maintains that properly applied, deadlines foster innovation and efficiency. He shares personal experiences of writing books and managing software projects, highlighting that external accountability and clear deadlines help maintain momentum and make decisive progress.

Stanier advises leaders to set explicit deadlines and be open to negotiation, as this simple technique can significantly improve productivity. He recommends implementing a weekly reporting cadence to create a routine of planning, executing, and reporting progress, which redefines how teams perceive and accomplish their work.

Key Points

Parkinson's Law: Work expands to fill the time available for its completion.

Iron Triangle: The three constraints of a project are scope, resources, and time. Changing one affects the others.

Importance of Deadlines:

Deadlines create urgency and drive progress.

Without deadlines, projects suffer from feature creep and scope bloat.

Properly applied deadlines lead to better results.

Application of Deadlines:

Challenging but realistic deadlines foster innovation and efficiency.

External accountability helps maintain momentum.

Clear deadlines force decisive actions and progress.

Weekly Reporting Cadence:

Teams should plan, execute, and report progress weekly.

This routine reshapes how teams perceive and complete their work.

Leadership Advice:

Set explicit deadlines and be open to negotiation.

Use deadlines to create a clear tempo and cadence.

Properly wielded deadlines are a powerful tool for effective leadership.

Key Quotes

"Work expands so as to fill the time available for its completion."

"By setting challenging deadlines you will actually get better results."

"Deadlines force a clear tempo and cadence and, fundamentally, they make things happen."

"When wielded with grace, good intentions, and knowledge of what gets humans moving and feeling good, deadlines are a powerful tool."

"The only way that I’ve written books is because I set myself a challenging, but not impossible, schedule with the publisher."

Why It Matters

Understanding and applying Parkinson's Law is crucial for effective time management and productivity in both personal and professional contexts. The article highlights the importance of deadlines in preventing project delays and inefficiencies. By setting and adhering to clear deadlines, leaders can drive progress, foster innovation, and achieve better outcomes. This concept is particularly relevant in large organizations where the natural tendency for work to expand can lead to significant inefficiencies. By implementing the strategies discussed, leaders can create a more disciplined and productive work environment, ultimately leading to more successful projects and a more efficient organization.

Dark Forest theory: A terrifying explanation of why we haven’t heard from aliens yet - Big Think

The article on Big Think explores the "Dark Forest Theory," a terrifying hypothesis that attempts to explain why humanity has not yet detected any signs of extraterrestrial life despite the vastness of the cosmos and the high probability of other civilizations existing. The theory, popularized by Liu Cixin's science fiction novel The Dark Forest, posits that advanced civilizations are deliberately avoiding detection because they fear being annihilated by other, equally paranoid civilizations. The theory is framed as a solution to the Fermi Paradox, which questions why we haven't encountered any evidence of alien life despite the high likelihood of its existence.

Key Points

The Drake Equation and Fermi Paradox:

The Drake Equation suggests there should be numerous advanced civilizations in our galaxy.

The Fermi Paradox arises from the contradiction between these high probabilities and the lack of evidence for alien life.
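
For readers who want to see why the result swings so widely, the Drake Equation in its standard form is a product of seven factors: N = R* x fp x ne x fl x fi x fc x L. The Python sketch below only illustrates that arithmetic. The function name and both sets of input values are hypothetical placeholders chosen for illustration, not figures from the article or accepted estimates.

```python
from math import prod


def drake_estimate(r_star, f_p, n_e, f_l, f_i, f_c, lifetime_years):
    """Multiply the seven Drake Equation factors.

    r_star: new stars formed per year in the galaxy
    f_p: fraction of stars with planets
    n_e: potentially habitable planets per star that has planets
    f_l, f_i, f_c: fractions that develop life, intelligence,
                   and detectable communication
    lifetime_years: how long a civilization stays detectable
    """
    return prod([r_star, f_p, n_e, f_l, f_i, f_c, lifetime_years])


# Hypothetical inputs, for illustration only.
optimistic = drake_estimate(3, 1.0, 0.2, 1.0, 1.0, 0.2, 1_000_000)
pessimistic = drake_estimate(1, 0.2, 0.1, 0.01, 0.01, 0.1, 1_000)

print(f"optimistic inputs:  {optimistic:g} civilizations")
print(f"pessimistic inputs: {pessimistic:g} civilizations")
```

Because every factor is multiplied, modest disagreement about each one compounds into estimates that differ by many orders of magnitude, which is why the equation frames the paradox rather than settling it.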

Traditional Explanations:

Previous solutions to the Fermi Paradox include the rarity of life, the difficulty of developing intelligence, and the likelihood of civilizations self-destructing.

Introduction to Dark Forest Theory:

The theory posits that civilizations stay silent to avoid detection.

Central premise: All life desires to stay alive, and without assurances of peaceful intentions, the safest action is preemptive annihilation of others.

Literary Basis:

Liu Cixin's novel The Dark Forest captures the theory in the metaphor of the universe as a "dark forest" filled with silent, armed hunters.

Game Theory and Paranoia:

The theory is likened to the prisoner’s dilemma, where the safest strategy is to remain hidden and avoid contact.

Scientific Consideration:

Scientist David Brin suggested a similar idea where robotic probes might enforce this silent equilibrium in the galaxy.

The theory aligns with scientific principles without needing to alter the Drake Equation variables significantly.

Implications for Humanity:

Humanity has been broadcasting its existence for nearly a century, potentially revealing our location to any listening civilizations.

If the theory holds, our broadcasts could be dangerous, as they might attract hostile attention.

Key Quotes

Metaphor from The Dark Forest novel:

"The universe is a dark forest. Every civilization is an armed hunter stalking through the trees like a ghost... There’s only one thing he can do: open fire and eliminate them."

David Brin on the theory:

“It is consistent with all of the facts and philosophical principles described in the first part of this article. There is no need to struggle to suppress the elements of the Drake equation in order to explain the Great Silence.”

Why It Matters

The Dark Forest Theory offers a chilling yet plausible explanation for the Great Silence observed in the cosmos. It challenges the optimistic view of contacting extraterrestrial life and suggests that such contact could be inherently dangerous. This theory underscores the importance of considering the potential risks of broadcasting our presence to the universe and might influence future strategies in the search for extraterrestrial intelligence (SETI). By proposing that advanced civilizations might be avoiding detection to ensure their survival, the theory shifts the narrative from a hopeful search for alien neighbors to a more cautious and strategic approach to space exploration and communication.

Expeditions to AI Land · Relax. Don't worry about AI Tech Adoption. (weblog.lol)

The article "Relax. Don't Worry About AI Tech Adoption" by Georg Zoeller discusses the rapid advancements in AI technology and the overwhelming pressure many feel to keep up with these changes. Zoeller highlights the incredible pace of AI development and its implications, noting that the breakneck speed of progress can make it difficult for individuals and businesses to stay current. He argues against the impulse to immediately adopt every new AI tool or technology, suggesting instead that a more measured, strategic approach is necessary. The article emphasizes the importance of AI literacy and understanding the broader trends and impacts of AI before diving into adoption.

Key Points

Rapid AI Advancements:

AI technology has seen significant progress this year, with major improvements in performance, efficiency, and capability.

Various new AI tools and frameworks like RAG, LangChain, and others are flooding the market.

Pressure to Keep Up:

There's a constant barrage of information and marketing urging individuals and companies to learn and adopt new AI technologies.

This creates a sense of urgency and fear of falling behind.

The Trap of Immediate Adoption:

Zoeller warns against the impulse to quickly adopt new technologies without fully understanding them.

He likens this to "Premature Optimisation" in software engineering, where committing too early can lead to suboptimal outcomes.

Different Nature of Current AI Progress:

The current AI boom is likened to a Cambrian explosion, with rapid, unprecedented advancements.

Technologies and capabilities are evolving so quickly that what seems cutting-edge today might become obsolete in a few months.

Strategic AI Adoption:

Zoeller advocates for a strategic approach to AI adoption, focusing on understanding the technology and its implications before implementation.

He suggests building a broad AI literacy, establishing a strategic position, and then moving towards AI transformation with intent.

Challenges and Considerations:

Many AI technologies are still unreliable for business applications and come with legal, ethical, and operational challenges.

Businesses should be wary of jumping on the hype train without a solid understanding of these issues.

AI Literacy and Strategic Planning:

Understanding the fundamentals of AI and its potential impacts is crucial.

Strategic planning and literacy can help businesses navigate the rapid changes and avoid pitfalls.

Key Quotes

On the impulse to adopt new AI tech:

"Action gives us that feeling of agency in a world that increasingly moves at uncomfortable pace, creates a bubble around us that makes us feel powerful and in control. It's also a trap."

On the nature of current AI progress:

"We snapped from the leisurely pace of the familiar x86 curve of Moore's law onto the wild ascent that's Huang's Law governing this technology, flying at 2000 papers a week."

On the need for strategic adoption:

"Strategy > Tactics. Understanding > Action. AI Literacy > AI Transformation."

Why It Matters

This article is significant because it provides a counter-narrative to the prevalent fear and urgency surrounding AI adoption. By advocating for a strategic, measured approach, Zoeller emphasizes the importance of understanding AI technology deeply before implementation. This perspective is crucial for businesses and individuals to avoid the pitfalls of premature adoption and to harness AI's potential effectively. The focus on AI literacy and strategic planning can help decision-makers navigate the rapid technological changes and make informed choices that align with long-term objectives.

Understanding the Y2038 Problem

The Y2038 problem, also known as the Year 2038 or Unix time overflow problem, is a significant issue for systems that use a 32-bit representation of time. Here’s a detailed breakdown of its scope and implications:

What is the Y2038 Problem?

The problem arises because many computer systems and software applications store time as the number of seconds since the Unix epoch (00:00:00 UTC on 1 January 1970) using a 32-bit signed integer. The maximum value for a 32-bit signed integer is 2,147,483,647, which corresponds to 03:14:07 UTC on 19 January 2038. One second later the counter can no longer hold the value and overflows. On typical systems it wraps around to the most negative value, which is interpreted as 20:45:52 UTC on 13 December 1901, so affected systems report wildly incorrect times.
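
The wraparound can be reproduced in a few lines. The following is a minimal Python sketch, assuming two's-complement wraparound (the behavior typically seen in practice); Python integers do not overflow on their own, so the hypothetical helper `wrap_int32` simulates a 32-bit signed counter.

```python
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1  # 2,147,483,647


def wrap_int32(value: int) -> int:
    """Reduce an integer to the signed 32-bit two's-complement range."""
    return (value + 2**31) % 2**32 - 2**31


def to_utc(seconds: int) -> datetime:
    """Convert seconds since the Unix epoch to a UTC datetime."""
    return EPOCH + timedelta(seconds=seconds)


last_valid = to_utc(INT32_MAX)
after_overflow = to_utc(wrap_int32(INT32_MAX + 1))

print(last_valid.isoformat())      # 2038-01-19T03:14:07+00:00
print(after_overflow.isoformat())  # 1901-12-13T20:45:52+00:00
```

Adding a timedelta to the epoch, rather than calling `datetime.fromtimestamp`, keeps the sketch working on platforms that reject negative timestamps.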

Scope and Impact

Embedded Systems:

Legacy Devices: Many older embedded systems, such as industrial control systems, medical devices, and consumer electronics, still use 32-bit time representations. These systems might not be easily upgradable or replaceable.

Critical Infrastructure: Systems that control critical infrastructure, including power grids, transportation systems, and communication networks, could be affected if they rely on 32-bit time.

Software Applications:

Operating Systems: Older versions of Unix-based operating systems, including some Linux distributions, BSD variants, and proprietary Unix systems, may be affected if they use 32-bit time representations.

Databases: Some database systems store timestamps in a 32-bit format, leading to potential data corruption or incorrect time calculations.

Programming Languages: Software written in languages like C and C++ that relies on a 32-bit time_t type for time representation may encounter issues.

Financial Systems:

Banking and Trading Platforms: Accurate timekeeping is crucial for transaction processing and auditing. An overflow could lead to erroneous timestamps on financial transactions, potentially causing significant disruptions.

Telecommunications:

Network Protocols: Protocols that rely on precise time synchronization, such as NTP (Network Time Protocol), could be disrupted, leading to issues in data transmission and network coordination.

Mitigation Efforts

Transition to 64-bit Systems:

Hardware and Software Upgrades: Transitioning to systems that use a 64-bit integer for time representation effectively mitigates the Y2038 problem. A signed 64-bit count of seconds can represent dates roughly 292 billion years into the future.

Operating System Updates: Modern operating systems, including recent versions of Linux, macOS, and Windows, already use 64-bit time representations or provide support for them.
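
The difference in range between the two representations is easy to check. This is a rough Python sketch; the helper name `years_of_range` is hypothetical, and a year is approximated as 365.25 days.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60  # Julian year, an approximation


def years_of_range(bits: int) -> float:
    """Years past the epoch that a signed counter of seconds can represent."""
    return (2 ** (bits - 1) - 1) / SECONDS_PER_YEAR


print(f"32-bit: about {years_of_range(32):.0f} years")               # about 68
print(f"64-bit: about {years_of_range(64) / 1e9:.0f} billion years")  # about 292
```

Sixty-eight years after 1970 is 2038, which is where the problem gets its name.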

Code Refactoring:

Updating Legacy Code: Refactoring legacy software to use 64-bit time representations or alternative timekeeping methods can prevent overflows.

Testing and Validation: Comprehensive testing and validation are necessary to ensure that systems function correctly after updates.

Awareness and Preparedness:

Industry Awareness: Raising awareness among industry stakeholders about the potential impacts of the Y2038 problem is crucial.

Preparedness Plans: Developing and implementing preparedness plans to address the problem before it occurs is essential for minimizing disruptions.

Conclusion

The Y2038 problem is a significant issue that could affect various systems across different industries. While the transition to 64-bit systems and time representations offers a solution, the challenge lies in upgrading or replacing legacy systems that are still in use. Proactive mitigation efforts, including software updates, hardware upgrades, and industry awareness, are crucial to addressing this problem before it manifests in 2038.

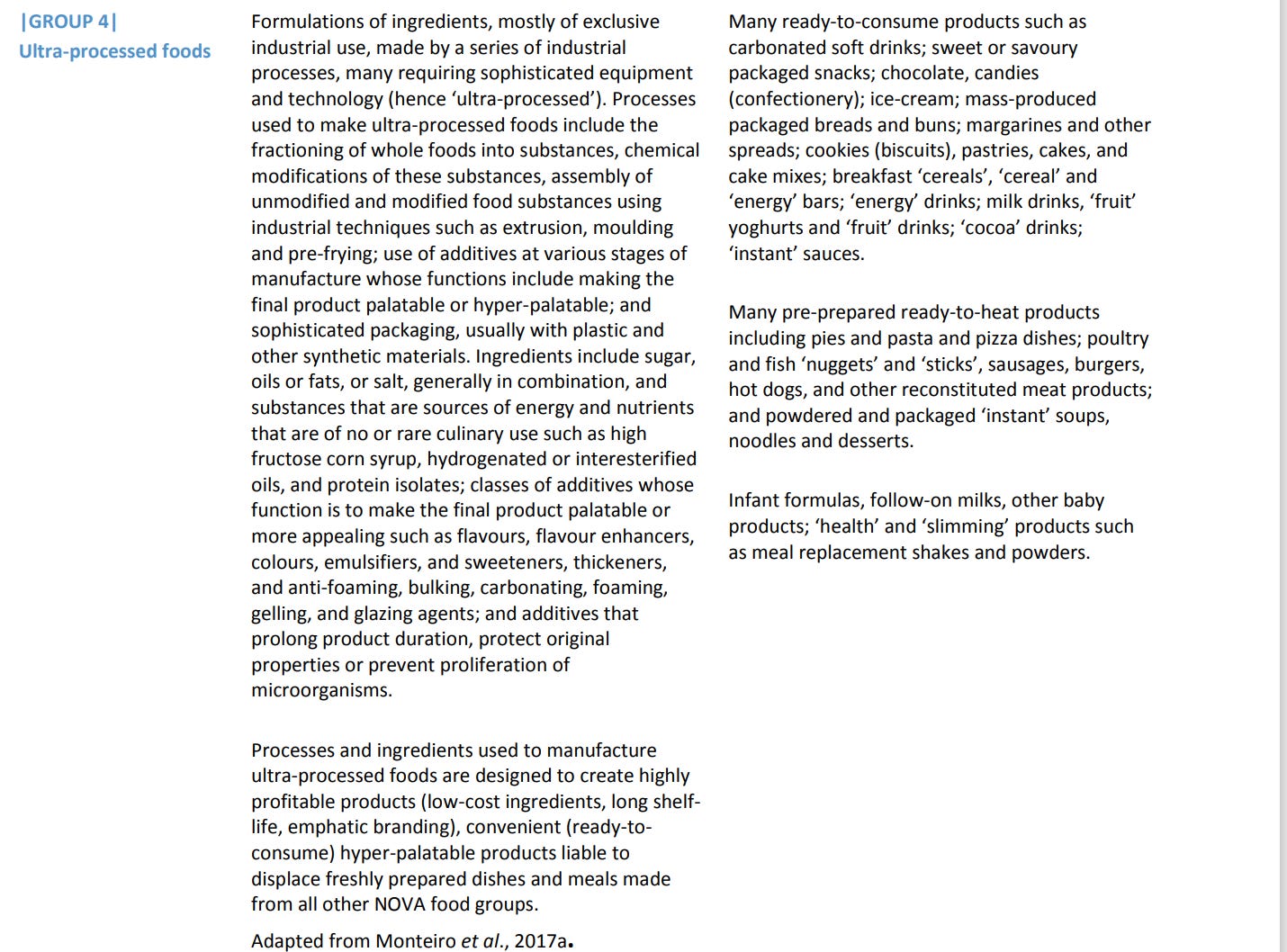

Ultra-processed foods, diet quality, and health using the NOVA classification system

The document, "Ultra-processed foods, diet quality, and health using the NOVA classification system", prepared by Carlos Augusto Monteiro, Geoffrey Cannon, Mark Lawrence, Maria Laura da Costa Louzada, and Priscila Pereira Machado for the Food and Agriculture Organization of the United Nations (FAO) in 2019, examines the impact of ultra-processed foods on diet quality and health. The report utilizes the NOVA classification system, which categorizes foods based on the extent and purpose of their processing. The main focus is on ultra-processed foods, their nutritional quality, and their association with non-communicable diseases. It aims to provide guidance on the inclusion of information about processed foods in dietary guidelines and public policies.

Key Points

NOVA Classification System:

The NOVA system classifies all foods into four groups based on the nature, extent, and purpose of industrial processing.

Group 1: Unprocessed or minimally processed foods.

Group 2: Processed culinary ingredients.

Group 3: Processed foods.

Group 4: Ultra-processed foods.

Ultra-processed Foods:

These are formulations predominantly or entirely made from substances extracted from foods, derived from food constituents, or synthesized in laboratories.

They are highly convenient and attractive due to their long shelf-life and hyper-palatability, often achieved through the use of additives.

Health Implications:

Ultra-processed foods tend to be nutritionally unbalanced, leading to over-consumption and the displacement of healthier food groups.

High consumption of ultra-processed foods is linked to an increased risk of obesity and various non-communicable diseases.

Dietary Guidelines and Public Policies:

The report criticizes traditional dietary guidelines for not adequately addressing the role of food processing.

It emphasizes the need for guidelines to consider the extent and purpose of food processing to better inform public health strategies.

Global Trends:

Ultra-processed foods constitute a significant portion of the diet in high-income countries and are rapidly increasing in middle-income countries.

Key Quotes

On the significance of processing:

"The significance of industrial processing, and in particular techniques and ingredients developed or created by modern food science and technology, on the nature of food and on the state of human health, is generally understated."

On the NOVA system and ultra-processed foods:

"NOVA classifies all foods into four groups... Ultra-processed foods are made up of snacks, drinks, ready meals, and many other product types formulated mostly or entirely from substances extracted from foods or derived from food constituents."

On the health impact of ultra-processed foods:

"Ultra-processed foods are typically nutritionally unbalanced and liable to be over-consumed and to displace all three other NOVA food groups."

On dietary guidelines:

"National dietary guidelines… do not address how types of processing affect the nature and quality of foods."

On global consumption trends:

"Ultra-processed foods now amount to around or even more than half of the total dietary energy consumed in high-income countries."

Why It Matters

Understanding the impact of ultra-processed foods is crucial for improving public health. The NOVA classification system provides a framework for identifying and categorizing foods based on their processing levels, which can help in formulating more effective dietary guidelines and public health policies. By recognizing the detrimental effects of ultra-processed foods, policymakers can better address issues related to obesity and non-communicable diseases, ultimately leading to healthier populations. The document underscores the importance of considering food processing in dietary recommendations and the need for a global shift towards more balanced and less processed dietary patterns.

How Many Steps Do You Really Need? There’s Good News for People Over 60. (msn.com)

The article "How Many Steps Do You Really Need? There’s Good News for People Over 60" on MSN discusses the findings of recent studies regarding the optimal number of daily steps for older adults to maintain health. Contrary to the popular belief that 10,000 steps per day are necessary, the research indicates that fewer steps can still significantly benefit people over 60.

Key Points

Reduced Step Requirement:

Studies suggest that older adults can experience substantial health benefits with fewer than 10,000 steps per day.

The optimal range for people over 60 is around 6,000 to 8,000 steps daily.

Health Benefits:

Regular walking at this step count can improve cardiovascular health, reduce the risk of chronic diseases, and enhance overall well-being.

Even moderate increases in physical activity can lead to significant health improvements.

Practical Recommendations:

Encourage older adults to focus on consistent, moderate activity rather than striving for high step counts.

Integrate walking into daily routines in manageable increments.

Key Quotes

On step count findings: "New research shows that older adults can see significant health benefits with fewer steps than previously thought."

Health impact: "For people over 60, walking between 6,000 and 8,000 steps a day can improve health outcomes significantly."

Why It Matters

Accessibility: The findings make the goal of maintaining good health more accessible for older adults who may find the 10,000-step target daunting.

Public Health: This information can help shape public health recommendations and encourage more older adults to engage in regular physical activity.

Quality of Life: By understanding that fewer steps can still yield health benefits, older adults can focus on achievable fitness goals, potentially leading to better adherence to regular exercise routines and improved quality of life.

How to Build an AI Data Center - by Brian Potter (construction-physics.com)

Brian Potter's article, "How to Build an AI Data Center," from the Institute for Progress series "Compute in America," explores the physical infrastructure necessary to support the burgeoning field of AI. The piece highlights the increasing demand for data centers due to the computational needs of AI, particularly for training large models like OpenAI's GPT-4. The article discusses the complexities of constructing and operating these data centers, focusing on their power requirements, cooling systems, efficiency improvements, and the trend towards larger facilities.

Key Points

Physical Infrastructure for AI:

AI development requires significant computational power, necessitating extensive physical infrastructure.

Large AI models like GPT-4 demand enormous amounts of computation, which cannot be supported by consumer-grade devices.
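
To give a sense of scale, the estimate quoted later in this summary (21 billion petaFLOP for training GPT-4) can be converted into raw operations and into time on a single device. The Python sketch below is a back-of-the-envelope calculation only: the device throughput of one trillion operations per second is a hypothetical round number, not a figure from the article, and real training runs are limited by memory and interconnect as well as raw compute.

```python
PETA = 10**15
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60

# "21 billion petaFLOP", as quoted from the article.
GPT4_TRAINING_FLOP = 21 * 10**9 * PETA  # 2.1e25 operations

# Hypothetical sustained throughput of a single consumer device.
ASSUMED_DEVICE_FLOP_PER_SECOND = 10**12


def training_years(total_flop: float, flop_per_second: float) -> float:
    """Years needed to perform total_flop at a constant rate."""
    return total_flop / flop_per_second / SECONDS_PER_YEAR


years = training_years(GPT4_TRAINING_FLOP, ASSUMED_DEVICE_FLOP_PER_SECOND)
print(f"{GPT4_TRAINING_FLOP:.1e} operations")
print(f"about {years:,.0f} years on the assumed device")
```

Under that assumption the run would take hundreds of thousands of years on one device, which is why the work is spread across many thousands of accelerators in a data center.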

Investment in Data Centers:

Major companies are heavily investing in data centers to meet AI demands:

Amazon plans to spend $150 billion over 15 years.

Meta has allocated $37 billion for 2024 alone.

CoreWeave is building 28 data centers in 2024.

Power Constraints:

Power availability is a critical factor, with many utilities naming data centers as their primary source of customer growth.

Data centers consume vast amounts of electricity, comparable to small cities or industrial processes.

Efficiency Improvements:

Advances in data center design and technology have significantly improved energy efficiency:

Power Usage Effectiveness (PUE) has improved from around 2.5 in 2007 to about 1.5 on average today.

Hyperscalers like Meta and Google achieve even lower PUEs (around 1.1).
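
PUE is the ratio of total facility power to the power delivered to the IT equipment, so a value of 1.0 would mean no overhead at all. The Python sketch below applies the three PUE figures above to the same IT load to show what they mean in practice. The 100 MW IT load and the function name are assumptions made for illustration.

```python
def facility_power_mw(it_load_mw: float, pue: float) -> float:
    """Total facility power implied by an IT load and a PUE value."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_mw * pue


IT_LOAD_MW = 100.0  # hypothetical IT load, for illustration only

scenarios = [("2007 average", 2.5), ("current average", 1.5), ("hyperscaler", 1.1)]
for label, pue in scenarios:
    total = facility_power_mw(IT_LOAD_MW, pue)
    overhead = total - IT_LOAD_MW  # cooling, power conversion, lighting, etc.
    print(f"{label}: PUE {pue} -> {total:.0f} MW total, {overhead:.0f} MW overhead")
```

For the same computing load, moving from a PUE of 2.5 to 1.1 cuts the non-IT overhead from 150 MW to about 10 MW.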

Cooling Systems:

Data centers use sophisticated cooling systems to manage the heat generated by thousands of computers.

Techniques include hot aisle/cold aisle arrangements and multi-loop cooling systems.

Reliability and Redundancy:

Data centers are designed for high reliability, often using redundant systems to ensure minimal downtime.

Future Trends:

Data centers are growing larger, with some campuses reaching gigawatt-scale power demands.

Despite efficiency gains, the overall power consumption of data centers is expected to rise significantly.

Regulatory and Infrastructure Challenges:

Some regions have imposed moratoriums or restrictions on new data center construction due to power constraints.

The U.S. faces challenges in expanding electrical infrastructure to meet growing demand.

Key Quotes

"Training OpenAI’s GPT-4 required an estimated 21 billion petaFLOP."

"Amazon plans on spending $150 billion on data centers over the next 15 years in anticipation of increased demand from AI."

"Data centers consume large amounts of power... Today, large data centers can require 100 megawatts of power or more."

"Power availability has already become a key bottleneck to building new data centers."

Why It Matters

Economic Growth: The expansion of AI capabilities promises significant economic benefits, but it hinges on the availability of robust physical infrastructure.

National Security: Advanced AI systems could play a crucial role in national security, making leadership in AI infrastructure a strategic priority.

Energy Consumption: The rapid growth of data centers highlights the need for sustainable energy solutions and efficient power management.

Technological Progress: Continued innovation in data center design and efficiency is essential to support the next generation of AI advancements.

Regulatory Impact: Understanding the regulatory and infrastructure challenges is crucial for policymakers and industry leaders to ensure the sustainable growth of AI infrastructure.